PowerProtect Data Manager 19.12 is now out, and in this post I’ll take you through some of the new features…

NetWorker 19.3 introduced the option to redeploy VMware image-based backup proxies via NWUI. Previously that functionality had been…

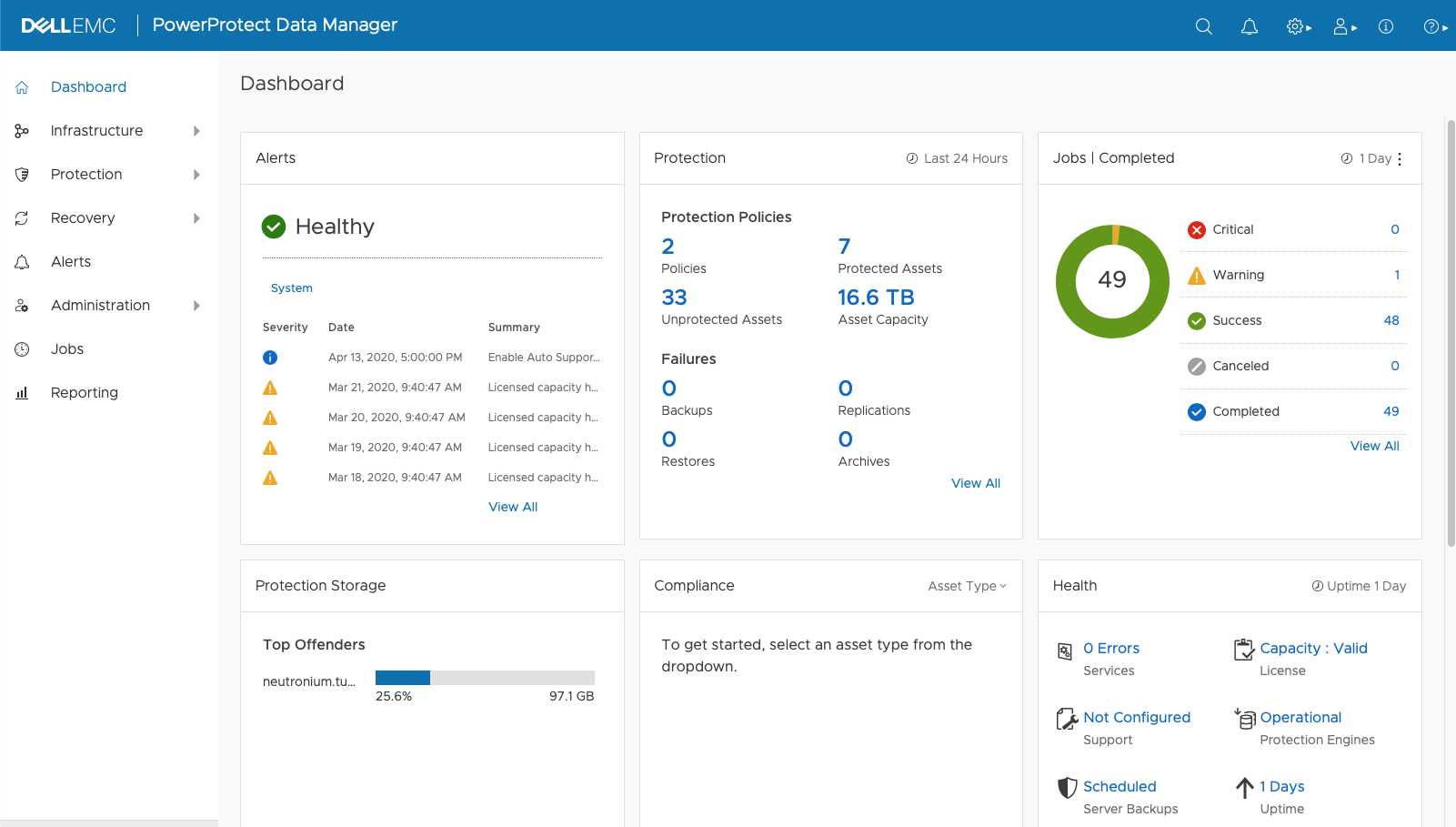

Last week saw the release of PowerProtect Data Manager (PPDM) 19.5. That’s the fifth release of PPDM since its inception,…

Last week saw the release of version 19.3 of Dell Data Protection Software – Avamar, NetWorker, Data Protection Advisor and…

We’ve just had our long, long weekend in Australia. Back in the days of tape libraries, there used to be…

PowerProtect Data Manager (PPDM) 19.2 came out a couple of weeks ago, and last week I blogged about some of…

It’s been a busy few weeks for Dell EMC on Data Protection product releases – you’d have thought the new…

NetWorker 9.2.1 has just been released, and the engineering and product management teams have delivered a great collection of features,…

I know, I know, it’s winter up there in the Northern Hemisphere, but NetWorker 9.1 is landing and given I’m in Australia, that…

NetWorker 9.0 SP1 (aka “9.0.1”) was released at the end of June. I meant to blog about it pretty much as soon as…