This is an adjunct post to the current series, “Data lifecycle management”, and is intended to provide a little more information about the types of archiving that can be done.

Strictly speaking, when we talk about archiving (rather than tiering), there are two distinctly different approaches to archival operations:

- Stub based archive – transparent to the end user

- Process archive – requires access changes by the end user

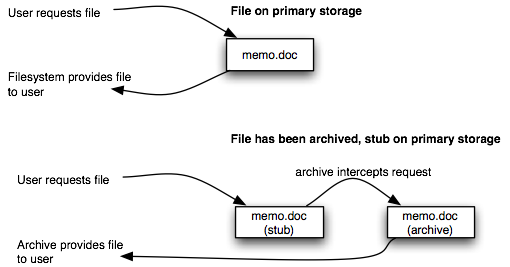

Stub based archive is an interesting beast. The entire notion is to effectively present a unified, unmodified view of the filesystem(s) to the end user such that data access continues as always, regardless of whether the file currently exists on primary storage, or has been archived. Conceptually, it resembles the following:

When a file is archived, a stub with the same name and extension is left behind. The archive system sits between end-user processes and filesystem processes, and detects accesses to stubs. When a user accesses a stub, the archive process intercepts that read and returns the real file. At most, the user will notice a delay in the file access, depending on the speed of the archive storage. If the user subsequently writes to the file, the stub is replaced with the new version of the file, restarting the file usage cycle. Backup systems, when properly integrated with stub based archive, will back up the stub rather than retrieve the entire file from archive.
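To make the stub mechanism a little more concrete, here's a toy sketch in Python. It's purely illustrative: real stub-based archive products intercept access at the filesystem or driver level, whereas this uses ordinary functions that a caller has to invoke explicitly. The names `STUB_MARKER`, `archive_file`, `is_stub`, `read_file` and `write_file` are all hypothetical, as is the idea of storing the archive location inside the stub itself.

```python
import shutil
from pathlib import Path

# Hypothetical marker; real products track stubs via filesystem-level metadata.
STUB_MARKER = b"STUB:"


def archive_file(path: Path, archive_dir: Path) -> None:
    """Move a file to archive storage and leave a same-named stub behind."""
    archive_dir.mkdir(parents=True, exist_ok=True)
    target = archive_dir / path.name  # assumes no name collisions in the archive
    shutil.move(str(path), str(target))
    # The stub keeps the original name and extension, and points at the archive copy.
    path.write_bytes(STUB_MARKER + str(target).encode())


def is_stub(path: Path) -> bool:
    """Check whether the file on primary storage is only a stub."""
    with path.open("rb") as handle:
        return handle.read(len(STUB_MARKER)) == STUB_MARKER


def read_file(path: Path) -> bytes:
    """Stand-in for the intercepted read: a stub access returns the real file."""
    data = path.read_bytes()
    if data.startswith(STUB_MARKER):
        archived = Path(data[len(STUB_MARKER):].decode())
        return archived.read_bytes()
    return data


def write_file(path: Path, content: bytes) -> None:
    """A write replaces the stub with a full file on primary storage."""
    path.write_bytes(content)
```

The point to take away for backup is `is_stub`: a backup product that's properly integrated would copy the small stub as-is, rather than calling the equivalent of `read_file` and dragging the full content back from archive storage.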

Archive systems such as those described above allow for highly configurable archive policies – simple rules such as “files not accessed in 180 days will be archived”, as well as more complex rules, e.g., “Excel files not accessed in 365 days by finance users AND 180 days by management users will be archived”.
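As a rough illustration of how rules like these might be evaluated, here's a minimal Python sketch. It assumes the archive system tracks the last access time per user group, which is my assumption rather than a description of any particular product. The names `FileRecord`, `ArchiveRule` and `ANY_GROUP` are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Dict, Optional, Tuple

ANY_GROUP = "*"  # hypothetical wildcard meaning "access by anyone"


@dataclass
class FileRecord:
    name: str
    last_access_by_group: Dict[str, datetime]

    def last_access(self, group: str) -> Optional[datetime]:
        """Most recent access by the group, or by anyone for ANY_GROUP."""
        if group == ANY_GROUP:
            return max(self.last_access_by_group.values(), default=None)
        return self.last_access_by_group.get(group)


@dataclass
class ArchiveRule:
    idle_days_by_group: Dict[str, int]  # every listed condition must hold (AND)
    suffixes: Tuple[str, ...] = ()      # empty tuple means any file type

    def matches(self, record: FileRecord, now: datetime) -> bool:
        if self.suffixes and not record.name.lower().endswith(self.suffixes):
            return False
        for group, days in self.idle_days_by_group.items():
            last = record.last_access(group)
            # A group that has never accessed the file is treated as idle.
            if last is not None and now - last < timedelta(days=days):
                return False
        return True


# "Files not accessed in 180 days will be archived."
simple_rule = ArchiveRule({ANY_GROUP: 180})

# "Excel files not accessed in 365 days by finance users AND
#  180 days by management users will be archived."
excel_rule = ArchiveRule(
    {"finance": 365, "management": 180},
    suffixes=(".xls", ".xlsx"),
)
```

A file would then be a candidate for archiving when `rule.matches(record, now)` returns true for any configured rule.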

Stub based archiving is best suited to large environments, which is something of a paradox, because it has the potential to introduce a new headache for backup administrators: massively dense filesystems. For more information on dense filesystems, read “In-lab review of the impact of dense filesystems”. The stub issue is something I’ve touched on previously in “HSM implications for backup”.

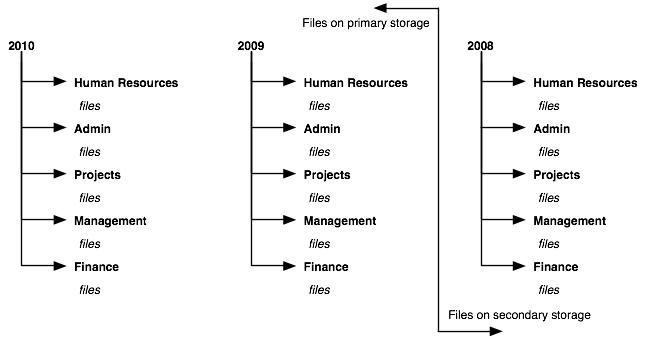

The other archive method is what I’d refer to as “process based archive”. This is used in a lot of smaller businesses, and centres around very simple archive policies where entire collections of data are stored in a formal hierarchy, and periodically archived – for instance:

In this scenario, filesystems are configured and data access rules are established such that users know data will either be in location A, or location B, based on a simple rule – e.g., the date of the file. In this sense, data written to primary storage is written in a structure that allows wholesale relocation of large portions of it as required. Using the example above, user data structures might be configured to be broken down by year. So rather than a single “human resources” directory on the fileserver, for instance, there would be one under a parent directory of 2010, one under a parent directory of 2009, etc. As data access becomes less common, the older year parent directories (with all their hierarchies) are either taken offline entirely or moved to slower storage – but regardless, they receive multiple “final” archive style backups before being taken out of the backup regime entirely.
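The relocation step in a year-based layout like this is simple enough to sketch in a few lines of Python. This is only an illustration under my own assumptions: that the year directories sit directly under one primary root, that a fixed number of years stays on primary storage, and that the “final” archive backups have already been taken before anything is moved. The function names `years_to_archive` and `relocate` are hypothetical.

```python
import shutil
from pathlib import Path
from typing import List


def years_to_archive(primary: Path, current_year: int, keep_years: int) -> List[Path]:
    """Return year-named directories outside the retention window, oldest first.

    With current_year=2010 and keep_years=2, the directories 2010 and 2009
    stay on primary storage and 2008 or earlier are selected.
    """
    cutoff = current_year - keep_years
    candidates = [
        entry
        for entry in primary.iterdir()
        if entry.is_dir() and entry.name.isdigit() and int(entry.name) <= cutoff
    ]
    return sorted(candidates, key=lambda entry: int(entry.name))


def relocate(year_dir: Path, archive_root: Path) -> Path:
    """Move a whole year hierarchy to slower storage and return its new path.

    Assumes the final archive-style backups were completed beforehand;
    this sketch does not check for them.
    """
    archive_root.mkdir(parents=True, exist_ok=True)
    destination = archive_root / year_dir.name
    shutil.move(str(year_dir), str(destination))
    return destination
```

Because the rule is just “which year is it in?”, users can work out for themselves whether to look in location A or location B, which is the access change that distinguishes this method from stub based archive.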

Irrespective of which archive process is used, the net result should be the same for backup operations – removing stagnant data from the daily backup cycle.

One thing you might want to ponder: is data storage tiering capable of fulfilling archive requirements? I would suggest at the moment that the jury is still out on this one. The primary purpose of data storage tiering is to move less frequently accessed data to slower and cheaper storage. That’s akin to archival operations, but unless it’s very closely integrated with the backup software and processes involved, it may not necessarily remove that lower-tiered data from the actual primary backup cycle. Unless the tiering integrates to that point, my personal opinion is that it is not really archive.
